
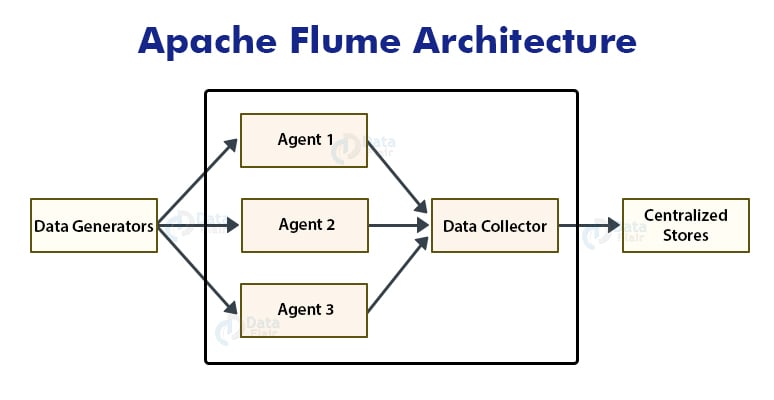

Now, we have a challenge here, how do we create large size files with the small number from of streaming data. If we have the small amount of data then we are not taking the advantage of HDFS which has the cluster of commodity server. If there is a number of small files then we are creating an extra overhead to NameNode to keep the metadata information for more number of files. The metadata about these data blocks is written into one high-end server which we call as NameNode. It is important to note that HDFS stores the data in the form of files which is distributed across the cluster of commodity server in terms of the block of data say 64MB, 128 MB chunk etc. Secondly, we need to devise a mechanism to buffer these streaming data. For this, firstly we need to integrate my application with JAVA API’s of HDFS data store. Let us suppose we want to write this data to HDFS. The moment this data gets generated, it needs to be sent to the data store. This data needs to be stored as the event occurs. The user clicking on a notification produces data.We have the number of events that are producing data for example: Let us suppose we have an application that sends “user notifications” for a professional network and then tracks metrics such as the number of views or number of clicks on user notifications. But there are problems when you are streaming from an application or bulk transferring the data from tables in RDBMS. We can directly use these API’s to write the data to HDFS, HBase, Cassandra etc.

Therefore, we have JAVA API’s for HDFS, HBASE, Cassandra etc. Normally, Hadoop Ecosystem exposes JAVA API’s to write data into HDFS or different data stores. The important thing to understand is that how do we get the RDBMS or Application data into HDFS. Let us suppose we have a requirement to port the archived RDBMS data to HDFS datastore. This could be OracleDB, MySQL, IBM DB2, SQL Server etc. The other kind of data source is a traditional RDBMS data. Therefore, we need a datastore to store this kind of data. The other advantage of these data stores is that you can use one single system for both transactional and analytical data.īut the main question here is where does this data come from? General, the data come from two kinds of sources.Įither it is an application that is producing the data such as user notifications, weblogs, sales etc produces a large amount of data on regular basis. they use the cluster of machines to scale them linearly. Advantages of Distributed Data StoresĪll of them are open source technologies, but the key question to think about is, why are these data stores so popular?Īll of these data stores are distributed in nature i.e. Among them, the most popular ones are HDFS, Hive, HBase, MongoDB, Cassandra. There are quite a few number of data stores in the market today.

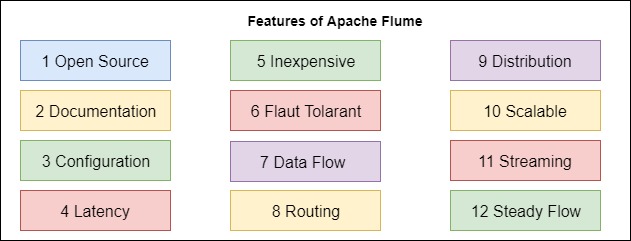

This tutorial outlines the need and importance of Apache Flume and Apache Sqoop.
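One way to picture the buffering mechanism mentioned above is a small in-memory buffer that collects events and hands them on as one large batch only once a size threshold is reached. The Python sketch below is illustrative only: `EventBuffer`, `flush_threshold_bytes`, and the `sink` callback are hypothetical names, and the sink merely stands in for a writer that would create one HDFS file per batch. It is not how Flume itself is implemented.

```python
from typing import Callable, List


class EventBuffer:
    """Collects small events and flushes them as one large batch.

    Hypothetical helper for illustration; `sink` stands in for a writer
    that would create a single file in HDFS per batch.
    """

    def __init__(self, flush_threshold_bytes: int, sink: Callable[[bytes], None]) -> None:
        self.flush_threshold_bytes = flush_threshold_bytes
        self.sink = sink
        self._events: List[bytes] = []
        self._size = 0

    def append(self, event: bytes) -> None:
        # Store the event with a newline so records stay separable in the file.
        record = event + b"\n"
        self._events.append(record)
        self._size += len(record)
        # Hand over one large batch once enough data has accumulated.
        if self._size >= self.flush_threshold_bytes:
            self.flush()

    def flush(self) -> None:
        # Skip empty flushes so no zero-byte files are created.
        if not self._events:
            return
        self.sink(b"".join(self._events))
        self._events = []
        self._size = 0


if __name__ == "__main__":
    files: List[bytes] = []  # each element represents one file written
    buffer = EventBuffer(flush_threshold_bytes=1024, sink=files.append)
    for i in range(1000):
        buffer.append(f"user={i} action=click".encode())
    buffer.flush()  # write whatever is left at shutdown
    print(f"1000 events written as {len(files)} files")
```

In a real pipeline the threshold would probably be chosen close to the HDFS block size, and a time-based flush would usually be added so that slow streams still get written; both choices are assumptions here rather than anything the tutorial prescribes.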
